In recent years, a growing number of enterprises have shifted their data engineering and data science workloads to managed, cloud-based processing. Here are a few reasons why:

- Big Data pioneers have lost significant market share, while Microsoft Azure and other public cloud offerings reported a 200% rise in adoption of their managed Big Data solutions.

- Cloud providers like Azure and AWS have released reference architectures supporting ‘Serverless Compute’, with Spark as the default compute engine for the Data Lake.

- Cloud storage costs are comparable to, or lower than, on-prem storage costs.

For all these reasons, serverless Big Data processing has become the default standard for data-driven, digitally oriented enterprises. As a result, companies like Databricks and Microsoft Azure have risen in popularity.

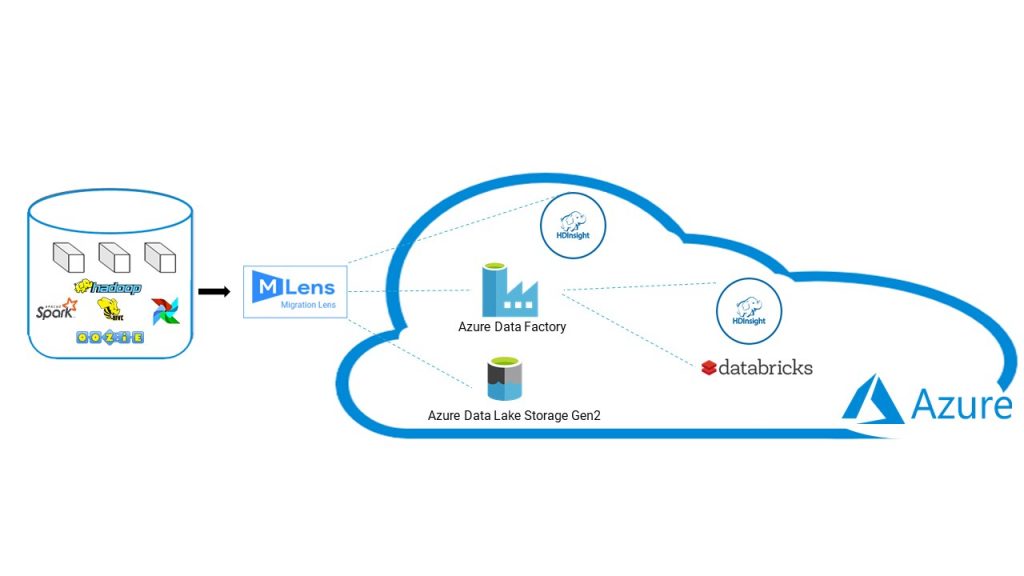

While there are several choices for enterprises shifting towards Managed Services on the Cloud, some of the options centered around Azure include:

- Azure Data Lake with Azure Data Lake Storage Gen2 as the storage layer and Azure HDInsight as the processing layer.

- Azure Data Lake Storage Gen2 as the storage layer, with Databricks or Qubole as the processing layer.

What is MLens?

MLens is an accelerator toolkit from Knowledge Lens that enables automated migration of data and computational workloads from Hadoop clusters (Cloudera/Hortonworks/MapR distributions) to Databricks clusters on Azure or AWS. It provides one-time migration utilities to migrate data and metadata and to transform computational workloads from the Hadoop environment to Databricks Unified Analytics. It also includes assessment reports to accelerate both pre-migration and post-migration assessment.
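To make the data-migration idea a little more concrete, here is a minimal sketch of how an HDFS location might be mapped to an Azure Data Lake Storage Gen2 location. It is an illustration only and not how MLens works internally; the function name `hdfs_to_abfss`, the container `datalake` and the storage account `examplestorage` are hypothetical. The only fixed piece is the standard `abfss://<container>@<account>.dfs.core.windows.net/<path>` URI format used by ADLS Gen2.

```python
from urllib.parse import urlparse


def hdfs_to_abfss(hdfs_uri: str, container: str, account: str) -> str:
    """Map an hdfs:// URI to an abfss:// URI, keeping the directory path.

    Hypothetical helper for illustration; container and account are
    placeholders chosen by the caller.
    """
    parsed = urlparse(hdfs_uri)
    if parsed.scheme != "hdfs":
        raise ValueError(f"expected an hdfs:// URI, got: {hdfs_uri!r}")
    # Drop the namenode host/port and keep only the path on the cluster.
    path = parsed.path.lstrip("/")
    return f"abfss://{container}@{account}.dfs.core.windows.net/{path}"


if __name__ == "__main__":
    # Assumed example values, not taken from any real environment.
    print(hdfs_to_abfss("hdfs://namenode:8020/warehouse/sales/2020",
                        container="datalake", account="examplestorage"))
```

A mapping like this would typically keep the on-cluster directory layout intact on the target side, so that table locations in the metadata can be rewritten in a predictable way.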

But, why MLens?

Knowledge Lens has partnered with the likes of Databricks and Microsoft Azure to accelerate our customers’ Big Data adoption and the migration of Big Data workloads to Databricks Spark on Azure.

As our technology partner, Microsoft Azure provides capabilities to build cloud-native microservices applications and to migrate workloads from on-prem or other clouds with cloud economics and efficiency.

As our implementation partner, Databricks brings us diverse capabilities in fields such as Data Decision, Data Science, Data Engineering and Advanced Analytics (AI/ML).

For our customers, this means a single solution for all their key Big Data use cases, including Data Migration, Workload Migration, Automated Disaster Recovery and more.

What’s more, MLens is the only tool on the market that can help you recover point-in-time snapshots of data on Hadoop Clusters; it also supports incremental live migration, which is one of the key elements for a successful migration strategy.
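As a rough illustration of what "incremental" means here, the sketch below picks only the files that changed since the last sync checkpoint. This is a generic technique shown under stated assumptions, not the MLens implementation; the `FileRecord` type, the `select_incremental` function and the sample paths are all hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass(frozen=True)
class FileRecord:
    """Hypothetical description of one file on the source cluster."""
    path: str
    modified_at: int  # last modification time, epoch seconds
    size_bytes: int


def select_incremental(files: Iterable[FileRecord], last_sync: int) -> List[FileRecord]:
    """Return files modified strictly after the last sync, oldest first."""
    changed = [f for f in files if f.modified_at > last_sync]
    return sorted(changed, key=lambda f: f.modified_at)


if __name__ == "__main__":
    # Assumed sample listing; a real run would read this from the cluster.
    listing = [
        FileRecord("/warehouse/sales/part-0", modified_at=100, size_bytes=10),
        FileRecord("/warehouse/sales/part-1", modified_at=300, size_bytes=20),
        FileRecord("/warehouse/sales/part-2", modified_at=200, size_bytes=30),
    ]
    for record in select_incremental(listing, last_sync=150):
        print(record.path)
```

Copying only what changed after each checkpoint is what lets the source cluster stay live while the target catches up, instead of requiring one long freeze for a full copy.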

Our Target Customers:

● Enterprises that want to scale storage and compute clusters independently

● Enterprises that want to migrate to the Databricks Unified Analytics Platform

● Enterprises that want to modernize the Enterprise Data Lake with Delta Lake

● Enterprises that want to build cloud-native big data apps from legacy on-prem big data applications

● Enterprises that want to migrate more than 50 TB of data

● Enterprises that want to use Databricks Spark for their future Big Data Analytics workloads

How do we achieve this?

- Workshop to discover the existing landscape

- Build the target architecture

- Build the necessary configurations for the migratable asset repository

- Generate the pre-assessment report using MLens

- Execute the actual migration, subject to the report findings

- Keep the architectural CoE team aligned throughout the migration journey

Knowledge Lens has been helping customers on their Big Data Analytics journey right from its inception. Our deep expertise in Big Data Engineering and Data Science has helped enterprises quickly adopt the Enterprise Data Lake 3.0. We take pride in our world-class MLens Migration Toolkit, which helps enterprises not only with their Big Data migration journey but also with Backup, Disaster Recovery and Data Masking solutions.

You can try MLens using our free limited-edition license, which can migrate 2 TB of data to Azure ADLS Gen2 or Amazon S3 at high speed over a secured firewall, without any intermediate data hops.

For more details and a demo of the toolkit, reach out to us here or comment below.